Unveiling TikTok Moderation: Discoveries And Insights For A Safer Social Media

TikTok moderation refers to the policies and practices employed by the social media platform TikTok to regulate user-generated content. These measures aim to ensure that content on the platform adheres to community guidelines and legal requirements, fostering a safe and appropriate environment for users.

Effective TikTok moderation is crucial for maintaining the platform's integrity, protecting users from harmful or inappropriate content, and complying with regulatory obligations. It involves a combination of automated systems, human moderators, and community reporting to identify, review, and remove content that violates platform policies. By implementing robust moderation practices, TikTok can promote a positive and responsible online environment for its vast user base.

The following sections cover the key aspects of TikTok moderation, including its underlying principles, the role of artificial intelligence and human moderators, and the challenges and controversies surrounding content regulation on social media platforms.

TikTok Moderation

Effective moderation is crucial for TikTok's success as a social media platform. Here are ten essential aspects that contribute to the platform's ongoing efforts to ensure a safe and appropriate user experience:

- Community Guidelines: Clear rules and expectations for user-generated content.

- Automated Detection: AI-powered tools to identify potential violations.

- Human Review: Trained moderators to assess flagged content and make final decisions.

- Transparency and Accountability: Clear processes for reporting and appealing moderation decisions.

- Age Verification: Measures to restrict access to age-inappropriate content.

- Cultural Sensitivity: Consideration of diverse cultural norms and perspectives in moderation practices.

- Legal Compliance: Adherence to local laws and regulations governing online content.

- User Education: Initiatives to inform users about platform policies and responsible online behavior.

- Collaboration with Experts: Engagement with external experts to enhance moderation strategies.

- Continuous Improvement: Ongoing evaluation and refinement of moderation practices to keep pace with evolving user behavior and content trends.

These aspects are interconnected and interdependent, forming a comprehensive framework for TikTok moderation. They enable the platform to strike a balance between protecting users from harmful content and preserving freedom of expression and creativity. By continuously improving its moderation practices, TikTok aims to foster a positive and responsible online environment for its global user base.

Community Guidelines

In the context of TikTok moderation, community guidelines serve as the foundation for regulating user-generated content and ensuring a safe and appropriate platform environment. These guidelines outline acceptable and unacceptable behaviors, providing users with clear expectations for their contributions to the platform.

- Defining Boundaries: Community guidelines establish clear boundaries for user behavior, prohibiting content that incites violence, promotes hate speech, or violates copyright laws. By defining these boundaries, TikTok creates a predictable and consistent environment for users, reducing uncertainty and promoting responsible content creation.

- Protecting Vulnerable Users: Community guidelines play a crucial role in protecting vulnerable users, particularly children and teenagers, from harmful content. By prohibiting content that exploits, abuses, or endangers minors, TikTok creates a safer online space for these users.

- Maintaining Platform Integrity: Clear community guidelines help maintain the integrity of the TikTok platform by preventing the spread of misinformation, spam, and other disruptive content. This ensures that users can access and engage with high-quality, authentic content.

- Fostering a Positive Community: Community guidelines promote a positive and respectful online community by encouraging users to engage in constructive and meaningful interactions. By prohibiting bullying, harassment, and other forms of harmful behavior, TikTok fosters a welcoming and inclusive environment for all users.

In summary, community guidelines are an essential component of TikTok moderation, providing clear rules and expectations for user-generated content. They define boundaries, protect vulnerable users, maintain platform integrity, and foster a positive community, contributing to a safe and enjoyable online experience for all users.

Automated Detection

In the realm of TikTok moderation, automated detection plays a critical role in identifying and flagging potential violations of community guidelines. AI-powered tools are employed to scan vast amounts of user-generated content, analyzing it for indicators of harmful or inappropriate behavior. This automated detection process is essential for maintaining a safe and positive platform environment.

The integration of AI in TikTok moderation offers several key advantages. First, it enhances efficiency and scalability. AI algorithms can process immense volumes of content quickly and consistently, enabling the platform to proactively identify potential violations that might otherwise go unnoticed. Second, AI tools can analyze content nuances that may be difficult for human moderators to detect, such as hidden patterns, suggestive language, or manipulated imagery. This comprehensive analysis helps TikTok to address emerging trends and sophisticated attempts to bypass moderation filters.

Furthermore, automated detection supports a more consistent moderation process. Because the same criteria are applied to every piece of content, AI tools reduce the variation that can arise between individual reviewers. They are not free of bias, however: models can inherit bias from the data they are trained on, which is one reason human review remains part of the process. Applied carefully, this consistency builds trust among users and contributes to a sense of predictability and accountability within the TikTok community.

In summary, automated detection, powered by AI, is an indispensable component of TikTok moderation. It enables the platform to efficiently identify potential violations of community guidelines, safeguarding users from harmful content while keeping moderation decisions consistent.

Human Review

Human review is a crucial component of TikTok moderation, involving trained moderators who assess flagged content and make final decisions on whether it violates community guidelines. This human element plays a vital role in ensuring the accuracy and fairness of the moderation process.

- Contextual Understanding: Human moderators possess the ability to understand the context and nuances of user-generated content, which can be difficult for AI algorithms to grasp. They can assess the intent behind a post, consider cultural factors, and evaluate the overall impact of the content on the platform.

- Complex Decision-Making: Moderators are equipped to make complex decisions, especially in cases where the violation is not clear-cut. They can weigh the potential harm caused by the content against the user's freedom of expression, considering factors such as the severity of the violation, the user's intent, and the potential impact on the community.

- Bias Mitigation: Human moderators help mitigate potential biases that may arise from automated detection systems. They can provide a more balanced perspective, ensuring that content is not removed solely based on algorithmic decisions.

- User Trust and Transparency: The involvement of human moderators increases user trust in the moderation process, as it demonstrates that decisions are not made solely by algorithms. Transparency reports and clear communication about moderation decisions help build user confidence in the platform's commitment to fairness and accountability.

In summary, human review is an essential aspect of TikTok moderation. Trained moderators bring contextual understanding, complex decision-making abilities, and bias mitigation to the moderation process. Their involvement enhances the accuracy and fairness of content moderation, building user trust and transparency, and ultimately contributing to a safe and positive platform environment.
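The division of labor described above, where automated systems flag content and trained moderators make the final call, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not TikTok's actual system: the names `Post`, `ReviewQueue`, `toy_risk_score`, `triage`, and `human_review`, the word blocklist, and the 0.5 threshold are all hypothetical stand-ins.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical cutoff: scores at or above this value are sent to human review.
FLAG_THRESHOLD = 0.5


@dataclass
class Post:
    post_id: str
    text: str


@dataclass
class ReviewQueue:
    pending: List[Post] = field(default_factory=list)


def toy_risk_score(post: Post) -> float:
    """Stand-in for an ML classifier: fraction of words found on a toy blocklist."""
    blocklist = {"spam", "scam"}
    words = post.text.lower().split()
    if not words:
        return 0.0
    return sum(word in blocklist for word in words) / len(words)


def triage(post: Post, queue: ReviewQueue,
           score_fn: Callable[[Post], float] = toy_risk_score) -> str:
    """Automated step: flag risky posts for review, let the rest through."""
    if score_fn(post) >= FLAG_THRESHOLD:
        queue.pending.append(post)
        return "flagged"
    return "published"


def human_review(queue: ReviewQueue,
                 decide: Callable[[Post], bool]) -> Dict[str, str]:
    """Human step: a moderator's decision is final for every flagged post."""
    decisions = {}
    while queue.pending:
        post = queue.pending.pop(0)
        decisions[post.post_id] = "removed" if decide(post) else "restored"
    return decisions
```

In this sketch the automated step never removes content on its own; it only decides what a moderator sees. Here `decide` represents the moderator's judgment, which is where context, intent, and cultural factors would come in.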

Transparency and Accountability

Transparency and accountability are fundamental pillars of TikTok moderation, ensuring that the community can trust in the fairness and consistency of content moderation practices. Clear processes for reporting and appealing moderation decisions empower users and promote a sense of justice and recourse within the platform.

- User Reporting: TikTok provides users with easy-to-use reporting tools to flag content that violates community guidelines. This allows users to actively participate in maintaining a safe and appropriate platform environment by bringing potential violations to the attention of moderators.

- Moderation Transparency: TikTok publishes detailed community guidelines and moderation policies, ensuring that users are aware of the standards expected of content on the platform. Additionally, the platform provides transparency reports that disclose the number and types of content removed, offering insights into moderation practices.

- Appeals Process: Users have the right to appeal moderation decisions if they believe their content was removed in error. TikTok has established a clear appeals process that allows users to submit their case for review by a dedicated team of moderators.

- Community Feedback: TikTok values user feedback and regularly engages with the community to gather insights and improve moderation practices. Feedback mechanisms, such as surveys and focus groups, enable users to share their experiences and perspectives, contributing to the platform's ongoing efforts to refine its moderation approach.

These facets of transparency and accountability foster trust and confidence in TikTok moderation. By empowering users to report violations, providing clear guidelines, establishing an appeals process, and actively seeking community feedback, TikTok demonstrates its commitment to fairness, consistency, and responsiveness. This transparent and accountable approach contributes to a positive and inclusive platform environment where users feel respected and valued.

Age Verification

Age verification is a crucial component of TikTok moderation, encompassing measures designed to restrict access to content that may be harmful or inappropriate for younger users. This facet aligns with TikTok's commitment to protecting children and ensuring a safe and age-appropriate platform experience.

- Identity Verification: TikTok employs various methods to verify users' ages, including age gating, checks of government-issued identification, and partnerships with third-party age verification services. These measures help prevent underage users from accessing age-restricted content.

- Content Filtering: TikTok utilizes algorithms and human moderators to filter out content that is deemed inappropriate for younger audiences. This includes content involving violence, sexual themes, drug use, or other potentially harmful material.

- Parental Controls: TikTok provides parents and guardians with robust parental control tools. These tools allow them to set screen time limits, restrict access to certain content, and monitor their children's online activity.

- Educational Initiatives: TikTok collaborates with child safety organizations and experts to develop educational initiatives that teach children about online safety and responsible social media use.

These multifaceted age verification measures contribute to the overall effectiveness of TikTok moderation. By restricting access to inappropriate content, the platform fosters a safer and more age-appropriate environment for its vast user base, particularly for younger users who may be more vulnerable to online risks.

Cultural Sensitivity

In the context of TikTok moderation, cultural sensitivity plays a crucial role in ensuring that content moderation practices are inclusive, fair, and respectful of diverse cultural backgrounds. TikTok's global reach necessitates a nuanced understanding of cultural norms and values to avoid unintentional censorship or bias.

For instance, humor styles and social conventions can vary significantly across cultures. Content that may be considered offensive in one culture may be acceptable in another. By considering cultural contexts, moderators can make informed decisions that align with the platform's values while respecting cultural diversity.

Moreover, cultural sensitivity fosters trust among users from different backgrounds, promoting a sense of belonging and inclusivity. When users feel that their cultural identities are respected, they are more likely to engage with the platform and contribute to its vibrant community.

In conclusion, cultural sensitivity is an essential component of TikTok moderation. It ensures that content moderation practices are fair, inclusive, and respectful of diverse cultural perspectives. By embracing cultural sensitivity, TikTok creates a welcoming and supportive environment for users from all walks of life.

Legal Compliance

Legal compliance is a cornerstone of TikTok moderation, ensuring that the platform operates within the boundaries of local laws and regulations governing online content. This adherence is crucial for several reasons:

Firstly, legal compliance safeguards TikTok from legal liabilities and penalties. Violating local laws can result in fines, legal action, and reputational damage. By adhering to legal requirements, TikTok demonstrates its commitment to being a responsible corporate citizen and avoids potential legal entanglements.

Secondly, legal compliance fosters trust among users. When users know that TikTok operates within legal frameworks, they are more likely to trust the platform with their personal data and online interactions. This trust is essential for maintaining a thriving and engaged user base.

Thirdly, legal compliance contributes to a more positive and harmonious online environment. By removing illegal content, such as hate speech, violent extremism, and child sexual abuse material, TikTok helps prevent the spread of harmful and dangerous content online. This creates a safer and more enjoyable experience for all users.

In conclusion, legal compliance is an integral part of TikTok moderation. It protects TikTok from legal risks, builds trust among users, and contributes to a more positive online environment. By adhering to local laws and regulations, TikTok demonstrates its commitment to being a responsible platform that respects the legal and ethical boundaries of the communities it serves.

User Education

User education is a vital component of TikTok moderation, encompassing initiatives designed to inform users about platform policies and responsible online behavior. By educating users on these matters, TikTok aims to foster a safer and more positive platform environment.

- Policy Transparency and Accessibility: TikTok makes its platform policies easily accessible and understandable to users. These policies clearly outline acceptable and unacceptable behavior, providing users with a framework for responsible content creation and engagement.

- In-App Tutorials and Resources: TikTok offers in-app tutorials and resources that guide users through the platform's safety features and reporting mechanisms. These resources empower users to protect themselves from harmful content and inappropriate interactions.

- Collaborations with Experts and Organizations: TikTok collaborates with child safety organizations, mental health experts, and law enforcement agencies to develop educational campaigns and resources. These collaborations bring diverse perspectives and expertise to TikTok's user education efforts.

- Community Engagement and Feedback: TikTok actively engages with its user community to gather feedback and insights on user education initiatives. This feedback loop helps TikTok refine its approach and address the evolving needs of its users.

User education initiatives play a crucial role in the effectiveness of TikTok moderation. By equipping users with the knowledge and tools they need to navigate the platform safely and responsibly, TikTok creates a more positive and inclusive online environment for all.

Collaboration with Experts

In the context of TikTok moderation, collaboration with external experts plays a pivotal role in enhancing the platform's ability to address complex and evolving content moderation challenges. By engaging with experts from diverse fields, TikTok gains access to specialized knowledge, experience, and insights that inform and strengthen its moderation strategies.

- Advisory Boards and Councils: TikTok establishes advisory boards and councils comprised of experts in child safety, mental health, law enforcement, and other relevant domains. These experts provide ongoing guidance on policy development, content review practices, and user education initiatives.

- Research Collaborations: TikTok collaborates with academic institutions and research organizations to conduct studies on the impact of online content on users, particularly young and vulnerable populations. Research findings help TikTok refine its moderation strategies based on data-driven insights.

- Expert Consultations: TikTok regularly consults with individual experts on specific content moderation issues. These consultations provide valuable perspectives on emerging trends, best practices, and potential solutions to complex challenges.

- Industry Partnerships: TikTok partners with industry organizations and other social media platforms to share knowledge and develop joint initiatives aimed at combating harmful content online. Collaboration fosters a collective approach to content moderation and promotes industry-wide standards.

Collaboration with experts enhances TikTok moderation in several ways. Firstly, it brings diverse perspectives and specialized knowledge to the moderation process, ensuring that TikTok's policies and practices are informed by the latest research and best practices. Secondly, it fosters a sense of accountability and transparency, as external experts provide independent oversight and feedback on TikTok's moderation efforts. Thirdly, collaboration helps TikTok stay abreast of emerging trends and challenges, enabling it to adapt its moderation strategies proactively.

Continuous Improvement

In the dynamic landscape of social media, continuous improvement is paramount for effective moderation. TikTok recognizes the evolving nature of user behavior and content trends and has implemented a robust framework for ongoing evaluation and refinement of its moderation practices.

- Data-Driven Insights: TikTok leverages data analytics to identify patterns, trends, and areas for improvement in its content moderation efforts. By analyzing user feedback, content reports, and platform usage data, TikTok gains valuable insights into the effectiveness of its moderation strategies.

- Algorithm Refinement: TikTok employs machine learning algorithms to automate content moderation tasks. These algorithms are continuously refined based on data analysis and feedback from human moderators, enabling them to adapt to evolving content patterns and improve detection accuracy.

- Moderator Training and Development: TikTok invests in ongoing training and development programs for its human moderators. Moderators receive regular updates on platform policies, best practices, and emerging content trends to ensure they remain equipped to make informed and consistent moderation decisions.

- Community Engagement: TikTok actively engages with its user community to gather feedback and identify areas for improvement. The platform conducts surveys and focus groups and collaborates with user representatives to understand their perspectives and address their concerns regarding content moderation.

Through continuous evaluation and refinement, TikTok aims to strike a balance between protecting users from harmful content and preserving freedom of expression. By adapting its moderation practices to keep pace with evolving user behavior and content trends, TikTok fosters a safe and positive platform environment for its vast and diverse user base.
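One way to picture the algorithm refinement described above is to tune a flagging threshold against decisions that human moderators have already made. The Python sketch below is illustrative only and assumes a simple setup that the source does not describe: each reviewed item is a pair of a classifier score and the moderator's verdict, and the function names `flag_precision` and `pick_threshold` are hypothetical.

```python
from typing import Iterable, List, Optional, Tuple

# Each reviewed item: (classifier score, moderator confirmed a violation?)
Reviewed = List[Tuple[float, bool]]


def flag_precision(reviewed: Reviewed, threshold: float) -> Optional[float]:
    """Share of items flagged at this threshold that moderators confirmed."""
    flagged = [is_violation for score, is_violation in reviewed
               if score >= threshold]
    if not flagged:
        return None  # nothing flagged, so precision is undefined
    return sum(flagged) / len(flagged)


def pick_threshold(reviewed: Reviewed, candidates: Iterable[float],
                   min_precision: float) -> Optional[float]:
    """Lowest candidate threshold whose flags meet the precision target."""
    for threshold in sorted(candidates):
        precision = flag_precision(reviewed, threshold)
        if precision is not None and precision >= min_precision:
            return threshold
    return None
```

Choosing the lowest threshold that still meets the precision target keeps as many true violations in the review queue as possible while limiting how often moderators are sent content that turns out to be fine. A production system would weigh many more signals than this.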

TikTok Moderation FAQs

This section addresses frequently asked questions (FAQs) regarding TikTok moderation policies and practices, providing concise and informative answers to common concerns and misconceptions.

Question 1: What is TikTok moderation?

TikTok moderation refers to the policies, technologies, and human efforts employed by the platform to regulate user-generated content. Its primary objective is to maintain a safe, appropriate, and enjoyable environment for all users.

Question 2: Why is TikTok moderation important?

Effective TikTok moderation is crucial for several reasons. It protects users from exposure to harmful or inappropriate content, ensures compliance with legal and ethical standards, and maintains the platform's reputation and credibility.

Question 3: How does TikTok moderate content?

TikTok utilizes a combination of automated detection systems and human moderators to review user-generated content. Automated systems flag potentially inappropriate content, while human moderators make final decisions based on platform policies and community guidelines.

Question 4: What types of content are prohibited on TikTok?

TikTok prohibits a wide range of content that violates its community guidelines, including hate speech, violence, pornography, illegal activities, and content that poses a risk to minors.

Question 5: How can I report inappropriate content on TikTok?

TikTok provides users with easy-to-use reporting tools to flag inappropriate content. Users can report content by selecting the "Report" option available on individual videos or posts.

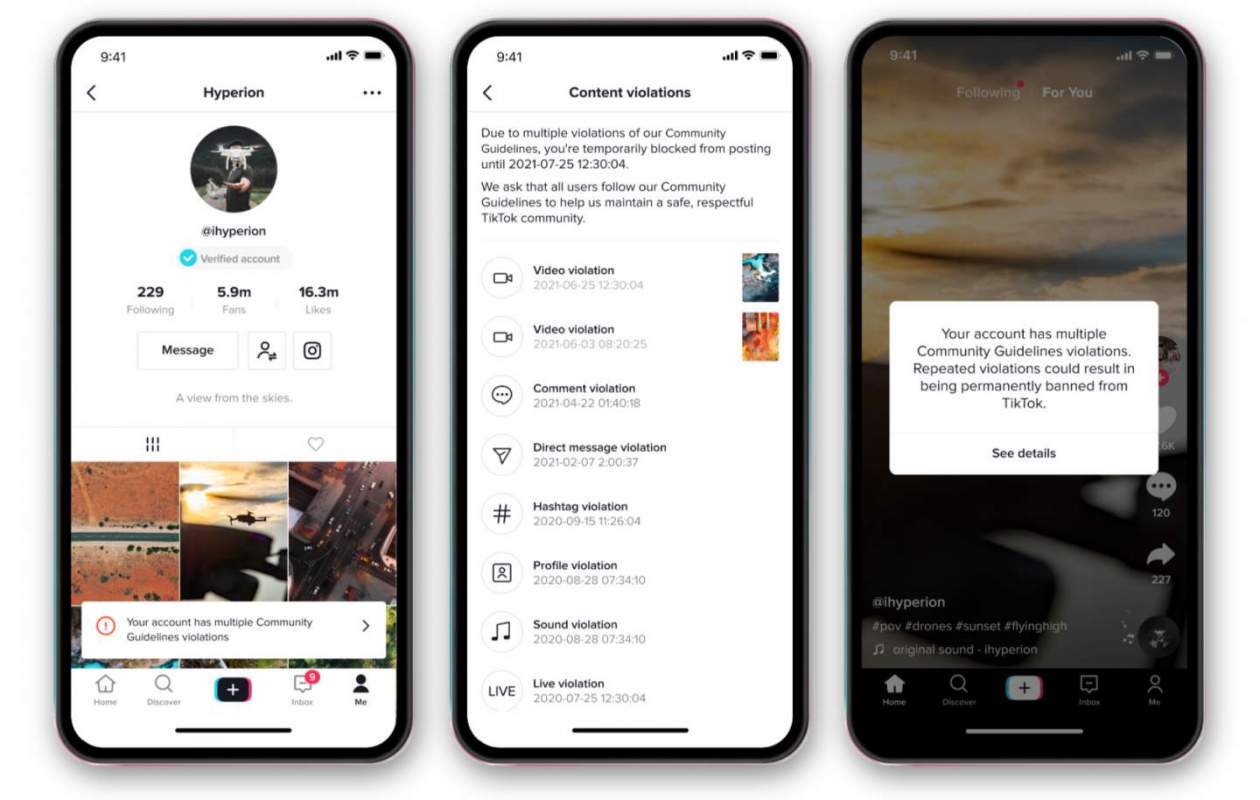

Question 6: What happens if I violate TikTok's community guidelines?

Violating TikTok's community guidelines may result in various consequences, including content removal, account suspension, or even a permanent ban. The severity of the consequences depends on the nature of the violation.

Summary: TikTok moderation is a complex and multifaceted process that involves a combination of automated systems and human intervention. It plays a vital role in maintaining a safe and appropriate platform environment for users, protecting them from harmful content and ensuring compliance with legal and ethical standards.

TikTok Moderation Tips

TikTok moderation plays a critical role in maintaining a safe and enjoyable platform for all users. By adhering to the following moderation tips, content creators can contribute to a positive and inclusive online environment.

Tip 1: Familiarize Yourself with Community Guidelines

Thoroughly read and understand TikTok's community guidelines. These guidelines outline the types of content that are prohibited or restricted on the platform, ensuring that your content aligns with the platform's standards.

Tip 2: Prioritize Authenticity and Originality

Create original content that is unique and engaging. Avoid reposting or imitating others' content, as it may violate intellectual property rights and undermine the platform's integrity.

Tip 3: Respect Copyright Laws

Be mindful of copyright laws when using copyrighted materials, such as music, images, or videos. Obtain proper permissions or use royalty-free alternatives to avoid copyright infringement claims.

Tip 4: Promote Positive and Uplifting Content

Use your platform to spread positivity and inspiration. Create content that uplifts others, promotes kindness, and encourages meaningful interactions.

Tip 5: Report Inappropriate Content

If you encounter inappropriate or offensive content, report it to TikTok using the designated reporting tools. By reporting such content, you help maintain the platform's safety and contribute to a more positive online experience for all.

Summary: By following these moderation tips, content creators can contribute to a thriving and responsible TikTok community. By prioritizing authenticity, respecting intellectual property, promoting positivity, and reporting inappropriate content, you can help create a safe and enjoyable platform for all users.

Conclusion

This comprehensive exploration of TikTok moderation has shed light on the multifaceted nature of content regulation on social media platforms. TikTok's commitment to fostering a safe and appropriate environment for its users is evident in the robust measures it has implemented, including community guidelines, automated detection systems, human moderation, and ongoing evaluation and refinement.

As the digital landscape continues to evolve, TikTok moderation will undoubtedly face new challenges and opportunities. The platform's ability to adapt and innovate while balancing the need for content regulation with the preservation of freedom of expression will be crucial in shaping the future of online interactions. By embracing collaboration, transparency, and user education, TikTok can continue to lead the way in creating a responsible and inclusive social media environment.